Proposal 11 for ICANN: Become Agile, Adaptive, and Responsive by "Embracing Evidence"

19 February 2014

This is the eleventh of a series of 16 draft proposals developed by the ICANN Strategy Panel on Multistakeholder Innovation in conjunction with the Governance Lab @ NYU for how to design an effective, legitimate and evolving 21st century Internet Corporation for Assigned Names & Numbers (ICANN).

Please share your comments/reactions/questions on this proposal in the comments section of this post or via the line-by-line annotation plug-in.

From Principle to Practice

The stability of the global Internet depends on the efficacy of ICANN’s working processes, which itself depends on stakeholder awareness, engagement and participation. As the Internet impacts all aspects of life all across the globe, it makes sense that the evidence and data that support and inform ICANN’s decisions are themselves highly diverse.

To manage the “global public resources” that are the Internet’s unique identifier resources, ICANN must be able to respond to changes in the Internet governance system and to changes in the social, economic, and technical circumstances in which the Internet is everywhere embedded. Organizations evolve by learning: they use quantitative and qualitative methods of rigorous assessment to figure out what works and to change what doesn’t. Therefore, ICANN should develop the institutional capacity – in the form of a research unit, research department, or research function – as well as a systematic approach to monitor, evaluate, learn from, and use evidence more effectively in ICANN’s decision-making practices.

ICANN must employ methods for embracing evidence that are robust, unbiased, and appropriate for the types of questions being asked. In moving from “faith-based” to “evidence-based” decision making, ICANN must be certain to avoid “decision-based evidence making.”

What Does it Mean to “Embrace Evidence”?

ICANN should use evidence in all aspects of its work. This includes its operations and administration, as well as its policy-development work, domain name system services, outreach and engagement, and strategic and budget planning. Different kinds of evidence may require different analytic frameworks with different challenges and concerns. Different stakeholders may have different criteria – both quantitative and qualitative – for determining if a program is successful.

In particular, because the types of information relevant to ICANN’s work are so varied, and because ICANN must respect its “public interest commitments,” it makes sense to take a meta-ethnographic approach to research. This is a way to systematize research: studies should combine data from different sources and “translate concepts and metaphors across studies.”[1] Research efforts should determine what they are looking for in advance. ICANN’s research must be “interpretive,” in that it should be comparative and grounded in normative frameworks with a view toward espoused principles. It should also be “adaptive,” in that research should inform an iterative approach to change through decision-making (for example, through the use of Randomised Controlled Trials (RCTs)[2]), which in turn should inform the research approach.

To be able to measure success or make “improvements” to a process, ICANN must first develop metrics & indicators within a framework of evaluation. Metrics can be thought of as units of measurement (such as return on investment). An indicator is “a metric tied to one or more targets,”[3] such as gross domestic product. Indicators build on outputs, which are basic metrics of success in quantitative terms, such as number of trainings delivered by a service, or number of people participating in a program. Because metrics and indicators exist within a framework of evaluation, ICANN should consult with stakeholders in developing this framework and should do so with an understanding that removing the focus on values will be impossible. For ICANN to “embrace evidence,” then, means developing a mechanism to be held accountable to the established and articulated values of its various stakeholders.
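The distinction between a metric and an indicator can be made concrete with a minimal sketch. The class names, the participation figure, and the targets below are hypothetical and chosen only for illustration; they do not describe any existing ICANN measurement.

```python
from dataclasses import dataclass


@dataclass
class Metric:
    """A unit of measurement with an observed value."""
    name: str
    unit: str
    value: float


@dataclass
class Indicator:
    """A metric tied to a target, following the definition quoted above."""
    metric: Metric
    target: float
    higher_is_better: bool = True

    def met(self) -> bool:
        # Compare the observed value with the target in the agreed direction.
        if self.higher_is_better:
            return self.metric.value >= self.target
        return self.metric.value <= self.target


# Hypothetical output metric: number of people participating in a program.
participants = Metric("program participants", "people", 120)
indicator = Indicator(participants, target=100)
print(indicator.met())
```

The target and the direction of improvement are exactly the parts that encode stakeholder values, which is why the framework of evaluation needs to be agreed with stakeholders before any numbers are collected.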

In order to do so, ICANN should convene research efforts through an institutional assessment function (or “Research Unit”). This unit would serve as a facilitator of internal and cross-community research efforts (e.g., research-gathering), and also create and maintain an evidence database. It would be tasked with linking the supply and demand of evidence. The proposed Research Unit is conceived as a cross-community resource – it should be able to inform decision-making in various ICANN contexts, and provide useful materials to people who want to learn about ICANN.

Why Does This Proposal Make Sense at ICANN?

If we take the term “governance” to mean “how institutions analyze information and make decisions to solve collective problems,”[9] then ICANN most certainly is in the business of governance.

The Internet governance ecosystem in which ICANN operates is constantly changing as a result of technological innovation and new applications for technologies. This means that for ICANN to do its work effectively, it must be able to respond to change, and this means that ICANN must be able to leverage available information to “understand what is going on, what to embrace and what to avoid.”[10] In particular, ICANN needs to use evidence from practice to understand “what works.”[11]

ICANN’s work demands “learning while doing, and continuous in course adjustments, based of course on measurement and evaluation.” Furthermore, ICANN must respect its “public interest commitment” in how it executes its work. This means ICANN has an obligation to use relevant research findings to inform its decision-making processes and the decisions it makes, and especially to find data and information relevant to those affected by ICANN’s decisions. It also means that ICANN has an obligation to involve stakeholders in evidence-collection and the research process. In systematically conducting research, ICANN would not only discover what is known (and how it is known) but also what is not known (and how to know it) in order to “inform decisions about what further research might best be undertaken, thereby creating a virtuous cycle.”[12] ICANN could accomplish this through the creation of a Research Unit.

Notably, this unit should not have the power to make binding decisions at ICANN. Essentially, the purpose of the unit is to create a space where researchers and research initiatives can convene, and also to provide support to the volunteers that work together via ICANN, who largely do not have the time or resources to produce their own research (this is especially a concern as ICANN often faces issues that are new and therefore require extensive research).

Implementation Within ICANN

To establish an assessment function or Research Unit at ICANN, it will be useful to create a research process that combines different focus areas with a general process guideline.[4] For example, such a process might include the following overarching steps:

- Establish an evaluative framework that is based in a concept or theory of change[5] in order to develop a research approach and initial agenda.

- Monitor and collect relevant evidence and synthesize this evidence to create an “evidence base.”

- Evaluate and rank projects and initiatives for how effective and/or cost-effective they were.

- Show relative cost and impact of different projects and initiatives.

- “Translate” the evidence into useful materials that are relevant to the needs and interests of ICANN’s stakeholders.

- Absorb the evidence by publishing and sharing findings in understandable, meaningful and actionable formats.

- Identify gaps in research and in research capabilities.

- Promote good evidence and advise others (e.g., other researchers, or the ICANN stakeholders for whom the research is intended) on how the evidence can be used.
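The “evaluate and rank” and “show relative cost and impact” steps above can be sketched in a few lines. This is only an illustration under stated assumptions: the initiative names, costs, and outcome counts are invented, and cost per unit of outcome is just one of several possible ways to compare initiatives.

```python
from typing import NamedTuple


class Initiative(NamedTuple):
    name: str
    cost: float     # total cost in hypothetical currency units
    outcome: float  # outcome units achieved, e.g. new participants


def cost_per_outcome(initiative: Initiative) -> float:
    """Cost of one unit of outcome; infinite when nothing was achieved."""
    if initiative.outcome <= 0:
        return float("inf")
    return initiative.cost / initiative.outcome


def rank_by_cost_effectiveness(initiatives):
    """Return initiatives ordered from most to least cost-effective."""
    return sorted(initiatives, key=cost_per_outcome)


# Hypothetical data, for illustration only.
initiatives = [
    Initiative("Regional workshop", 30_000, 300),
    Initiative("Newsletter", 2_000, 10),
    Initiative("Webinar series", 10_000, 200),
]

ranked = rank_by_cost_effectiveness(initiatives)
for item in ranked:
    print(f"{item.name}: {cost_per_outcome(item):.2f} per outcome unit")
```

A single ratio like this hides qualitative differences between initiatives, so it is best read alongside the other kinds of evidence rather than as a final verdict.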

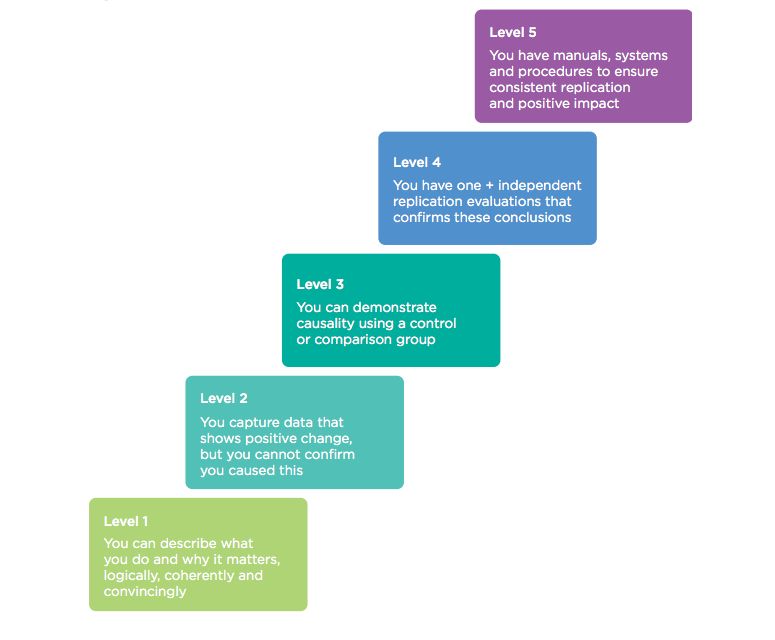

As an example, the following are NESTA’s (the U.K. National Endowment for Science, Technology, and the Arts) “standards of evidence” – i.e., a stacked approach to defining good evidence, and how to be rigorous in using and talking about good evidence:

Deploying any similar process via a Research Unit would involve aggregating and evaluating different kinds of evidence, each of which raises different considerations when it comes to best use. ICANN should take a deliberately inclusive view in defining “useful evidence.”[6] The following are some large “buckets” of evidence that might be useful for ICANN. The list is meant to be illustrative (of how different kinds of evidence can be useful in different ways), and not exhaustive:

- Big Data

- “Big data” is not a “type” of data per se so much as an expression of the relative size of the data that needs to be processed (that is, it reflects the relative ability of computing programs to analyze the data). Big data involves a massive amount of raw data that, when analyzed and put to use, can lead to new insights on everything from public opinion to operational concerns. Big data can be subjected to “multi-level linear modeling,”[7] where variables can be “stacked” within different categories simultaneously, creating complex hierarchies of variables that can capture dependencies within the data that single-level analysis would miss.

- The burgeoning literature on Big Data argues that it generates value by: creating transparency; enabling experimentation to discover needs, exposing variability and improving performance; segmenting populations to customize actions; replacing/supporting human decision making with automated algorithms; and innovating new business models, products and services.[8] The insights drawn from data analysis can also be visualized in a manner that passes along relevant information, even to those without the tech savvy to understand the data on its own terms (see GovLab Selected Readings on Data Visualization).

- Policy-Development Data

- At ICANN, this would include engagement and participation metrics. Research in policy-development should establish benchmarks for current practices to determine how different interventions have different impact. In particular, there should be a focus on how different levels and types of stakeholder engagement affect policy outcomes. Policy-development at ICANN would obviously also include registry and registrar data, contractual compliance data, and domain name services and operations data. In order to determine the impact (for example, economic) of ICANN policies, it makes sense to layer ICANN data with regional data. Notably, any efforts to define “public interest” could harness survey data (subjected, in particular, to discourse, content, and textual analysis), which could also be included within this section.

- Sentiment Analysis

- Sentiment analysis (or opinion mining) is the analysis of the kinds of data that come out of social media – a treasure-trove of information for any institution that must pay attention to its stakeholders’ opinions. Through new tools and the embrace of data science experts, ICANN could establish a means to identify and embrace insights from social information produced by its community and the wider public. Leveraging meta-data, keywords, phrases, and tags in data may also help ICANN find meaning in this evidence. One means for ICANN to experiment with sentiment analysis could involve asking people to use hashtags in their social media activities; these hashtags can then be tracked, and the relationships between them analyzed, to reveal new insights.
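As a minimal sketch of what “analyzing relationships between hashtags” might look like, the snippet below counts how often pairs of hashtags appear in the same post. The posts are invented, and co-occurrence counting is only one simple, assumed starting point; it does not by itself measure sentiment.

```python
import re
from collections import Counter
from itertools import combinations

HASHTAG = re.compile(r"#(\w+)")


def hashtag_pairs(posts):
    """Count how often each pair of hashtags appears in the same post."""
    counts = Counter()
    for post in posts:
        # Lower-case and de-duplicate tags so "#ICANN" and "#icann" match.
        tags = sorted({tag.lower() for tag in HASHTAG.findall(post)})
        counts.update(combinations(tags, 2))
    return counts


# Hypothetical posts, for illustration only.
posts = [
    "Great session on #ICANN policy #gTLD",
    "#gtld rollout questions for #icann staff",
    "Thoughts on #privacy and #ICANN",
]

pairs = hashtag_pairs(posts)
for pair, count in pairs.most_common():
    print(pair, count)
```

Pairs that recur often point to topics the community discusses together, which could then be examined with qualitative methods or a dedicated sentiment model.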

One specific pilot idea could build on the work underway within the Generic Names Supporting Organization (GNSO), which has recently chartered a Working Group – the “GNSO Metrics and Reporting Working Group” – which is meant to “further research metrics and reporting needs in hopes to improve the policy development process [PDP].” It seeks to remedy the fact that “metrics requirement for use in policy development are minimally identified in current PDP and WG documentation.”[13]

The Panel recommends that such research initiatives not be confined to the GNSO PDP, but be applied more generally throughout ICANN, for example to the work of other SO/AC structures, to the work of ICANN’s Global Stakeholder Engagement department, to defining and evaluating work in the “public interest,” and to the work of ICANN Strategy.

Using a systematic approach to research (one that incorporates feedback in decision-making processes where there are ongoing, open mechanisms to determine whether and how actions are taken in the “public interest”), ICANN could institute a research function to oversee and/or execute on the following in this context:

- Provide Perspective

- Produce clear summary reports, which explain various aspects and divisions of ICANN’s policy-development work in audience-specific ways.

- Material could be developed and curated both for specific audiences (that is, the specific stakes of specific stakeholder groups), and with a view toward audiences that may not organize along ICANN’s SO/AC lines.

- Produce an annual “State of ICANN” Report, written in collaboration with the ICANN community, staff, and wider world, which captures the challenges and concerns (and successes) at given intervals.

- Propose an annual “Inter-Community Action Plan” outlining the various ways SO/ACs could or should collaborate around specific topics and issues. This “Action Plan” would be created with input from other open platforms for participation and collaboration.

- Provide objective data for use by decision-makers in deliberation. Research would identify and design the “knobs” that policy makers use as variables or “leverage points” in policy-development and decision-making processes.

- Reflect upon and document policy-development processes to determine what makes a “good” policy, e.g., whether a policy can translate well into an evaluation matrix. This could be an iterative and time-bound process, documenting both successes and failures in policy-development to identify areas for improvement.

- Education and Capacity-Building

- Institute a grant program for identifying, incentivizing, and rewarding individuals who contribute positively to improving the policy development process.

- Combining the existing ICANN fellowship program with lessons learned from United States Presidential Innovation Fellows Program, the Code for America Fellowship, and San Francisco’s Mayor’s Innovation Fellowship program (as well as others), ICANN could help to develop, launch and sponsor a year-long fellowship program aimed at pairing global innovators, researchers and technologists with ICANN community leaders and staff to develop innovative solutions for improving ICANN’s policy development processes and its processes for establishing and reporting on metrics.

- Develop Public Interest Metrics and Indicators

- Foresight initiatives (e.g., those used by the Institute for the Future) could be developed to engage the ICANN community, staff, and wider world in foresight exercises, which are meant to build mutual awareness through early engagement and to align various stakeholder incentives.

- ICANN could also foster “Open Research Network” engagement initiatives aimed at developing shared understandings of ICANN’s public interest requirements and producing metrics for evaluating whether or not ICANN has met those requirements in various aspects of its work.

Examples & Case Studies – What’s Worked in Practice?

Initiatives Leveraging Different Types of Evidence

Big Data

- Project Narwhal – A technology development project that played an important role in President Obama’s 2012 campaign.[14] Project Narwhal was used for voter organizing and to take voter data (gathered in part during the 2008 campaign) under an umbrella platform that was able to “fuse the multiple identities of the engaged citizen—the online activist, the offline voter, the donor, the volunteer—into a single, unified political profile.”[15] The platform enabled matching separate data repositories to create more nuanced pictures of potential voters to inform campaign strategy.

- Healthcare in the U.S. – Across the United States, big data is increasingly being leveraged in healthcare contexts to better understand patient-doctor relationships and how to improve the performance of doctors. Analytics software allows hospitals to “compare physician performance based on various issues, such as complications, readmissions and cost measurement.”[16] These initiatives reduce the average costs for patients and also reduce the average length of hospital stays.

Policy-Development Data

- Alliance for Useful Evidence – The Alliance for Useful Evidence “champions the use of evidence in social policy and practice.” It convenes a network of members including government departments, NGOs, businesses, and universities.[17] It develops recommended practices and approaches to rigorous evidence.

- Behavioral Insights Team – The United Kingdom’s Behavioral Insights Team (BIT) is also known as the “Nudge Unit.” Set up in 2010, it works with government departments, charities, NGOs, and private sector organizations. It develops proposals for its partners, applying behavioral economics and psychology to public policy, and tests them empirically to find out what works. For example, BIT has used “a randomised control trial (RCT) to measure how successful different approaches were in encouraging more people to join the Organ Donor Register” and worked “with [HM Revenue & Customs] to test new forms of reminder letters to increase the rate of tax repayment.”[18]

Sentiment Analysis

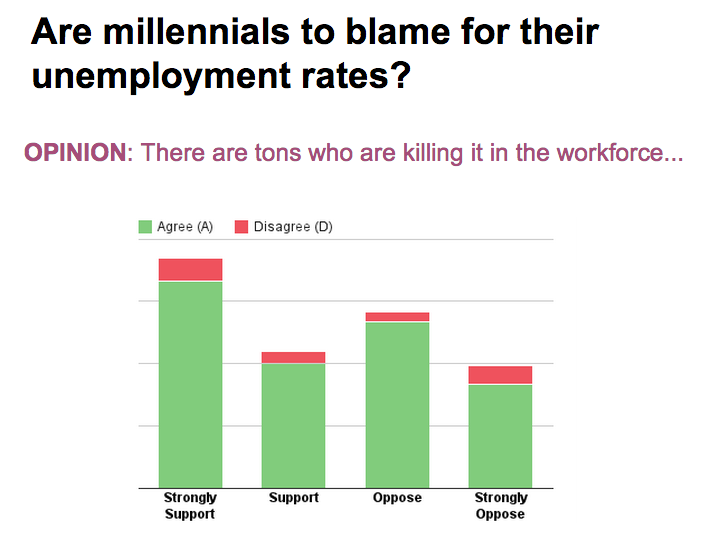

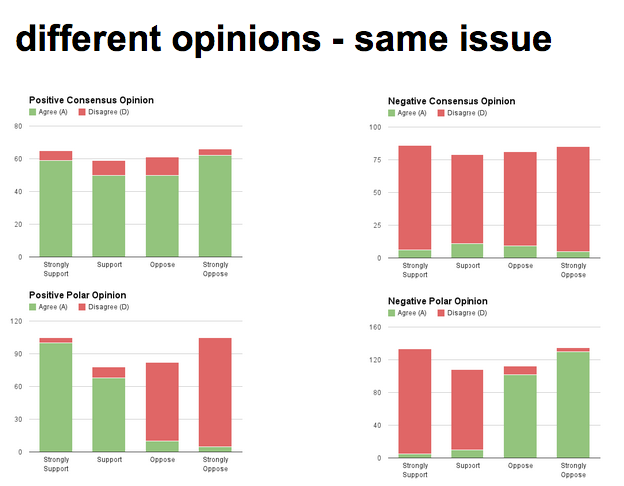

- Agreeble – An emerging social opinion platform that leverages an open survey process to identify polarizing and consensus-driving statements for a given set of issues. It generates and maintains what is called a Semantic Polarity Index (SPI) for each issue, which tells analysts not just how polarized the vote count is, but how much of the conversation around the issue is polarized as well. In the example on the left, voters generally agree with the “Opinion” (“there are tons who are killing it in the workforce”) even though they divide over the question: “are millennials to blame for their unemployment rates?” On the right, several different “polarity schemes” are visualized.

- Agreeble also matches respondents with one another based on their opinion voting patterns. This creates a voter graph that can help analysts identify how voters cluster with one another based on their general agreement/disagreement with each other’s opinions. This has potential to influence or inform how meetings and agendas around the issues are structured. Agreeble is a novel approach to turning people’s sentiments and opinions into useful evidence for framing decisions and discussions.
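The idea of a voter graph built from voting patterns can be sketched generically. The snippet below is not Agreeble’s method, which the source does not describe in detail; it simply assumes that each respondent votes +1 (agree) or -1 (disagree) on statements, and it scores each pair of respondents by the share of shared statements on which they voted the same way. All respondent and statement identifiers are hypothetical.

```python
from itertools import combinations


def agreement_rate(a, b):
    """Share of statements both respondents voted on where the votes match.

    Returns None when the two respondents have no statements in common.
    """
    shared = set(a) & set(b)
    if not shared:
        return None
    same = sum(1 for statement in shared if a[statement] == b[statement])
    return same / len(shared)


# Hypothetical votes: respondent -> {statement id: +1 agree / -1 disagree}.
votes = {
    "r1": {"s1": 1, "s2": -1, "s3": 1},
    "r2": {"s1": 1, "s2": -1, "s3": -1},
    "r3": {"s1": -1, "s2": 1},
}

# Edge weights of the voter graph: one agreement score per respondent pair.
graph = {
    (x, y): agreement_rate(votes[x], votes[y])
    for x, y in combinations(sorted(votes), 2)
}

for pair, score in graph.items():
    print(pair, score)
```

Pairs with high scores could be grouped with any standard clustering technique to show how respondents cluster around an issue.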

Institutional Innovation Research “Units”

- MindLab (Copenhagen, Denmark) – MindLab is a cross-governmental “innovation unit” that is part of three Danish ministries (the Ministry of Business and Growth, the Ministry of Education, and the Ministry of Employment) and one municipality (Odense), and that collaborates with the Ministry for Economic Affairs and the Interior. It teams up with both citizens and businesses to research, design and implement “social solutions” in areas such as digital self-service, education, and employment.

- The Studio (Dublin, Ireland) – The Studio is a cross-disciplinary “innovation team” of seven people from the Dublin City Council (DCC). Its aim is to improve the quality of DCC public services “by bringing people together to test new ideas and prototype new ways of working,” for example by using ideas-competitions and by experimenting with city data and open data policies.

- Laboratorio Para La Ciudad (Mexico City, Mexico) – The LabPLC experiments with civic innovation and public administration by acting as a locus for collaboration between government, civil society, business, and the technical/academic communities. The LabPLC sources innovative ideas and intelligent people from many fields and from many places to try and apply their experience, knowledge, and skills to local problems in Mexico City. At the same time, the Lab gives visibility to these projects and thereby builds a repository of good city management practices.

Open Questions – How Can We Bring This Proposal Closer to Implementation?

- How can ICANN turn evidence and data into trustworthy, actionable knowledge to get people engaged?

- For what issues does a “laboratory” strategy have merit? When is a centralized approach preferred to a decentralized approach to research and evidence-collection and use? Additionally, should a formal research function at ICANN be centralized (e.g., different stakeholder groups and departments make requests of a central “research team”), or should it be decentralized (e.g., researchers belong to each stakeholder group and/or ICANN department)?

- What are the major barriers to ICANN’s stakeholders using evidence? Are there different barriers at the Working Group level as compared to the Council or Board level?

- Are there situations that ICANN faces where it makes sense to ignore evidence? What frameworks need to be instituted for evidence and its use to be rigorous?

- How would an ICANN “Research Unit” balance or negotiate its position as a supplier and demander of evidence?

- How could research initiatives convened by or housed in ICANN be useful for larger or other audiences? How can ICANN add value to its research?

1. “Meta-ethnography.” BetterEvaluation.org.

2. Goldacre, Ben, and Torgerson, David. “Test, Learn, Adapt: Developing Public Policy with Randomised Controlled Trials.” UK Cabinet Office with the Behavioral Insights Team. June 14, 2012.

3. Barnett, Aleise, Dembo, David and Verhulst, Stefaan G. “Toward Metrics for Re(imagining) Governance: The Promise and Challenge of Evaluating Innovations in How We Govern.” GovLab Working Paper. v.1. April 18, 2013: 5.

4. Alexander, Danny, and Letwin, Oliver. “What Works: Evidence Centers for Social Policy.” UK Cabinet Office. March, 2013.

5. Barnett, Aleise, Dembo, David and Verhulst, Stefaan G. “Toward Metrics for Re(imagining) Governance: The Promise and Challenge of Evaluating Innovations in How We Govern.” GovLab Working Paper. v.1. April 18, 2013: 5.

6. About Us: “FAQs.” Alliance4UsefulEvidence.org.

7. Gelman, Andrew. “Multilevel (Hierarchical) Modeling: What It Can and Cannot Do.” American Statistical Association and the American Society for Quality “Technometrics”. August, 2006, Vol. 48, No. 3: 432.

8. “Data and Its Uses for Governance.” The GovLab Selected Readings. The GovLab Academy. P. 3.

9. Barnett, Aleise, Dembo, David and Verhulst, Stefaan G. “Toward Metrics for Re(imagining) Governance: The Promise and Challenge of Evaluating Innovations in How We Govern.” GovLab Working Paper. v.1. April 18, 2013: 4.

10. Lanfranco, Sam. “Internet Stakeholders and Internet Governance.” Distributed Knowledge Blog, November 15, 2013.

11. Alexander, Danny, and Letwin, Oliver. “What Works: Evidence Centers for Social Policy.” UK Cabinet Office. March, 2013.

12. Gough D, Oliver S, and Thomas J. “Learning from Research: Systematic Reviews for Informing Policy Decisions: A Quick Guide.” Alliance for Useful Evidence with NESTA, 2013: 5.

13. “Final Issue Report on Uniformity of Reporting.” GNSO.ICANN.org. P. 3.

14. Kolakowski, Nick. “The Billion-Dollar Startup: Inside Obama’s Campaign Tech.” Slashdot. January 9, 2013.

15. Issenberg, Sasha. “Obama’s White Whale.” Slate. February 15, 2012.

16. “Hospitals Prescribe Big Data to Track Doctors at Work.” Physicians News Network. July 17, 2013.

17. “About Us.” Alliance4UsefulEvidence.org; see also “Year One Overview.” Alliance4UsefulEvidence. (June 2013).

18. “Nesta Partners with the Behavioral Insights Team.” NESTA.org.uk. February 4, 2014.